Claims of cost reductions need to look comprehensively at all costs.

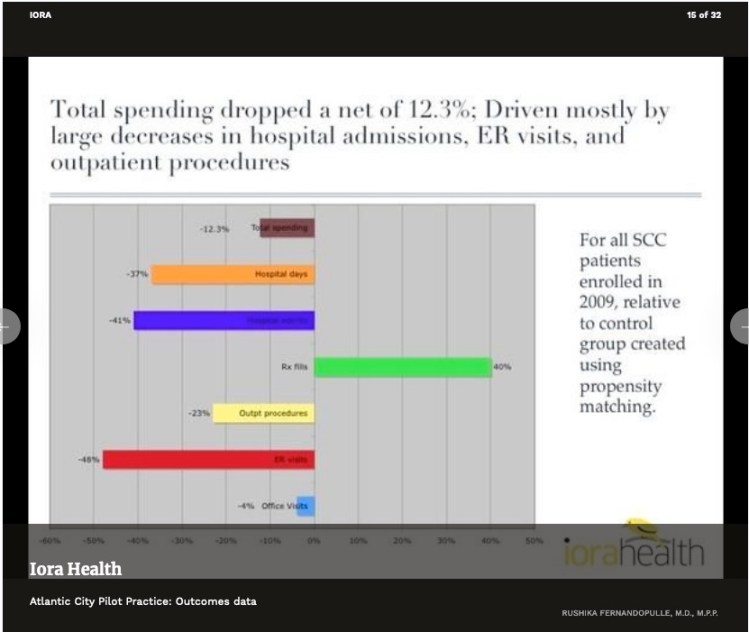

Consider this chart from an Iora presentation of some years ago.

The net drop in spending would look a lot bigger if prescription drugs (the green bar) were not part of the picture. But much of how primary care, direct or otherwise, works is by getting people on the right meds and then getting them fully compliant. When you spend money to save money, you need to look at the net change.

Perhaps the most widely cited claim of cost reductions is Qliance’s 2015 press release claiming overall reductions of about 20%. But their analysis did not cover drug costs. It seems highly probable that somewhat higher drug costs for Qliance’s patients are a major driver of the reductions in other categories of care. In that case, their overall cost reduction is probably significantly below the 20% they claimed. (And, lest we forget, the Qliance data made no attempt to examine the possibility of selection bias.)

Similarly, Qliance’s 2015 evaluation of “overall costs” omitted a category that had been included in their own earlier internal analysis: surgeries. If Qliance patients had just as many surgeries as non-Qliance patients, incorporating that result would lower the overall percentage savings. And, just as with prescription drugs, there’s some chance that Qliance’s success in reducing other costs might even come from its patients having more surgeries.
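To make the arithmetic concrete, here is a minimal sketch in Python. Every dollar figure is invented purely for illustration and is not Qliance’s data; the category names and the `overall_savings_pct` helper are hypothetical. It shows how a 20% saving measured on a partial set of categories can shrink once drugs (assumed somewhat higher for the DPC group) and surgeries (assumed equal) are counted.

```python
def overall_savings_pct(comparison, dpc):
    """Percent reduction in total spending across every category supplied."""
    total_comparison = sum(comparison.values())
    total_dpc = sum(dpc[category] for category in comparison)
    return 100.0 * (total_comparison - total_dpc) / total_comparison


# Hypothetical per-member annual spending in dollars (illustration only).
comparison_partial = {"hospital": 1000, "specialist": 600, "emergency": 400}
dpc_partial = {"hospital": 750, "specialist": 500, "emergency": 350}

# Savings measured only on the categories that were reported.
partial = overall_savings_pct(comparison_partial, dpc_partial)

# Add the omitted categories. Assumptions: drug spending is somewhat higher
# for the DPC group, and surgery spending is identical in both groups.
comparison_full = {**comparison_partial, "drugs": 600, "surgery": 400}
dpc_full = {**dpc_partial, "drugs": 700, "surgery": 400}
full = overall_savings_pct(comparison_full, dpc_full)

print(f"Reported categories only: {partial:.1f}%")  # 20.0%
print(f"All downstream costs:     {full:.1f}%")     # 10.0%
```

With these made-up numbers the headline figure is cut in half. The real effect could be larger or smaller, which is exactly why the omitted categories need to be reported.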

So, to generalize, a proper demonstration of success at reducing overall downstream care costs needs to consider all downstream costs to fairly reflect the achievement of direct primary care. Cherry-picking reported categories that show improvement gives a misleading picture. Several studies have, to their credit, used comprehensive measures of all downstream costs.

Ideally, studies are repeated at a fair interval or extended for an ample single period. A single snapshot or short-term study, if high or low, will likely regress to the mean when repeated or extended.
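Regression to the mean can be illustrated with a small simulation. The sketch below is a toy model under stated assumptions, not an analysis of any real practice: every simulated practice has the same true savings rate, each year’s measured result is that rate plus independent random noise, and all parameter values (`true_savings`, `noise_sd`, the number of practices) are hypothetical.

```python
import random
import statistics


def simulate(n_practices=2000, true_savings=5.0, noise_sd=10.0, seed=0):
    """Return (year-1 mean, year-2 mean) for the practices in the top 10% in year 1."""
    rng = random.Random(seed)
    # Same underlying savings everywhere; each year adds independent noise.
    year1 = [true_savings + rng.gauss(0, noise_sd) for _ in range(n_practices)]
    year2 = [true_savings + rng.gauss(0, noise_sd) for _ in range(n_practices)]

    # Select the practices whose first-year snapshot looked best.
    cutoff = sorted(year1, reverse=True)[n_practices // 10 - 1]
    top = [i for i in range(n_practices) if year1[i] >= cutoff]

    first = statistics.mean([year1[i] for i in top])
    second = statistics.mean([year2[i] for i in top])
    return first, second


first_year, second_year = simulate()
print(f"Top performers, year 1: {first_year:.1f}% savings")
print(f"Same practices, year 2: {second_year:.1f}% savings")
```

In this toy model, the practices that looked best in the first snapshot fall back toward the common underlying rate in the second year, even though nothing about them changed.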

A study of a single start-up period may be distorted. It may take more time for DPC to develop its full effect. On the other hand, there is good reason to expect that a start-up period will pull in a disproportionate share of enrollees who have never had a prior primary care provider, in which case there might be a first-year bonus of discovered problems.

Claims about the efficacy of direct primary care providers have a lot more credibility when they report data from direct primary care providers and not from concierge practices. MDVIP is not a direct primary care provider and neither is White Glove Health. Yet they have appeared in pro-DPC advocacy repeatedly.

Study by bona fide independent investigators is much preferred to self-reported brags, for the simple reason that self-studies that don’t favor the self-student are buried. Ultimately, the studies that best show real success are the studies that are designed to show the truth, whether that be success or failure.