The study indiscriminately mixed subscription patients with pay-per-visit patients. Selection bias was self-evident; the study period was brief; and the study cohort tiny. Still, the study suggests that choosing Nextera and its doctors was associated with lower costs; but the study’s core defect prevents the drawing of any conclusions about subscription primary care.

ADDENDUM of January 2021: In effect, for the seven-month duration of the study, the average enrollee in the Nextera option faced a deductible more than $600 higher than those who declined Nextera, further skewing results in Nextera’s favor. See new material near bottom of post.

The Nextera/DigitalGlobe “whitepaper” on Nextera Healthcare’s “direct primary care” arrangement for 205 members of a Colorado employer’s health plan is such a landmark that, in his most recent book, an acknowledged thought leader of the DPC community footnotes it twice on the same page, in two consecutive sentences, once as the work of a large DPC provider and a second time, for contrast, as the work of a small DPC provider.

The defining characteristic of direct primary care is that it entails a fixed periodic fee for primary care services, as opposed to fee-for-service or per-visit charges. DPC practitioners, their leadership organizations, and their lobbyists have made a broad, aggressive effort to have that definition inscribed into law at the federal level and in every state.

So why, then, does the Nextera whitepaper rely on the downstream claims costs of a group of 205 Nextera members, many of whom Nextera allowed to pay a flat per-visit fee rather than compensating Nextera only through a fixed monthly subscription fee?

This “concession” by Nextera preserved HSA tax advantages for those members. This worked tax-wise because creating a significant marginal cost for each visit yields a form of non-subscription practice that fits within the medical economic goals for which HDHP/HSA plans were created, in precisely the way that a subscription plan, which puts a zero marginal cost on each visit, cannot.

The core idea is that having more immediate “skin in the game” prompts patients to become better shoppers for health care services, and lowers patient costs. Those who pay subscription fees and those who pay per-visit fees obviously face very different incentive structures at the primary care level. It would certainly have been interesting to see whether Nextera members who paid under the two different models differed in their primary care utilization.

More importantly, however, precisely because the fee-per-visit cohort all had HDHP/HSAs, they had enhanced incentives to control their consumption of downstream costs compared to those placed in the subscription plan, who did not have HDHP/HSA accounts. The per-visit cohort can, therefore, reasonably be assumed to have experienced greater downstream cost reduction per member than their subscription counterparts.

Had the whitepaper broken the plan participants into three groups — non-Nextera, Nextera-subscriber, Nextera per-visit — there is good reason to believe that the subscription model would have come out one of the two losers.

Instead, Nextera analyzed only two groups, with all Nextera members bunched together. And, precisely because the group mixed significant numbers of both fixed-fee members and fee-for-service members, it is logically impossible to say from the given data whether the subscription-based Nextera members experienced downstream cost reductions that were greater than, the same as, or less than those of the per-visit-based Nextera members. So, while the study does suggest that Nextera clinics are associated with downstream care savings, it could not demonstrate that even a penny of the observed benefit was associated with the subscription direct primary care model.

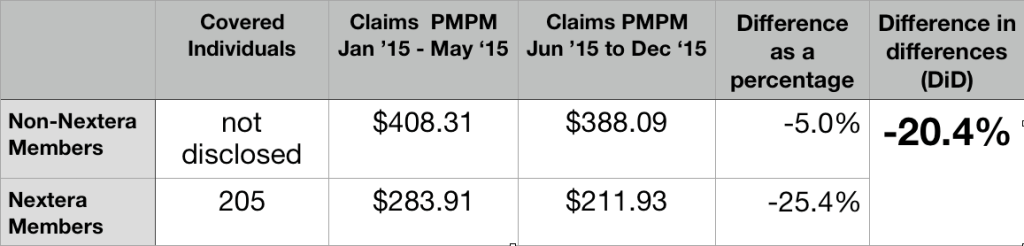

Here are the core data from the Nextera report.

205 members joined Nextera; they had prior claim costs PMPM of $283.11; the others had prior claim costs PMPM of $408.31. This is a huge selection effect. The group that selected Nextera had pre-Nextera claims that were over 30% lower than those declining Nextera.

Rather than award itself credit for that evident selection bias, Nextera more reasonably relied on a form of “difference in differences” (DiD) analysis. They credited themselves, instead, for Nextera patients’ claims costs having declined during seven months of Nextera enrollment by a larger percentage (25.4%) than the claims costs of their non-Nextera peers (5.0%), which works out to a difference in differences of 20.4 percentage points.
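The arithmetic behind that comparison can be sketched in a few lines of Python. The prior PMPM figures and the percentage declines are the ones quoted above; the post-period PMPM values are not quoted from the report but are merely implied by those percentages, and all variable names are illustrative.

```python
# Figures quoted in the text above.
prior_pmpm = {"nextera": 283.11, "non_nextera": 408.31}
pct_decline = {"nextera": 0.254, "non_nextera": 0.050}

# Assumption: post-period PMPM implied by the stated declines,
# not a figure taken directly from the report.
implied_post_pmpm = {
    group: prior_pmpm[group] * (1 - pct_decline[group])
    for group in prior_pmpm
}

# Difference in differences, in percentage points of each group's own baseline.
did_points = (pct_decline["nextera"] - pct_decline["non_nextera"]) * 100

# Pre-existing gap between the groups, which the DiD approach sets aside.
baseline_gap = 1 - prior_pmpm["nextera"] / prior_pmpm["non_nextera"]

print(f"DiD: {did_points:.1f} percentage points")
print(f"Baseline gap: {baseline_gap:.1%}")
for group, value in implied_post_pmpm.items():
    print(f"{group}: implied post-period PMPM ${value:.2f}")
```

Note that the 20.4 figure is a gap between two percentage changes, each measured against its own group's baseline; it is not a 20.4% cut from any common starting point.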

Again, the data from mixed subscription and per-visit members can only show the beneficial effect of choosing Nextera, rather than declining Nextera. The observed difference appears to be a nice feather in Nextera’s cap; but the data presented is necessarily silent on whether that feather can be associated with a subscription model of care.

It cannot be presumed that Nextera’s success could have been replicated on the DigitalGlobe employees who declined Nextera.

In the time since the report, Nextera has actively claimed that its DigitalGlobe experience demonstrates that it can reduce claim costs by 25%. Nextera should certainly amend that number to reflect the smaller difference in differences that its report actually shows (20%). But even that substituted claim of 20% cost reduction would require significant qualification before extension to other populations.

Even before they were Nextera members, those who eventually enrolled seem to have had remarkably low claims costs. The Nextera population may be so different from those who declined Nextera that the trend observed for the Nextera cohort cannot be assumed even for the non-Nextera cohort from DigitalGlobe, let alone for a large, unselected population like the entire insured population of Georgia.

Consider, for example, an important pair of clues from the Nextera report itself: first, Nextera noted that signups were lower than expected, in part because many employees showed “hesitancy to move away from an existing physician they were actively engaged with”; second, “[a] surprising number of participants did not have a primary care doctor at the time the DPC program was introduced”.

As further noted in the report, the latter group “began to receive the health-related care and attention they had avoided up until then.”

A glance at Medicare reminds us that routine screening at the primary care level is uniquely cost-effective for beneficiaries who may previously have avoided costly health care. Medicare’s failure to cover regular routine physical examinations is notorious. But there is one reasonably complete physical examination that Medicare does cover: the “Welcome to Medicare” exam.

First attention to a population of “primary care naives” is likely a way to pick the lowest hanging fruit available to primary care. Far more can be harvested from a population enriched with people receiving attention for a first time than from a group enriched with those previously engaged with a PCP.

Accordingly, the 20% difference in differences savings in the Nextera group cannot be automatically extended to the non-Nextera group.

Relatedly, the comparative pre-Nextera claim cost figure may reflect that the Nextera population had a disproportionately high percentage of children, of whom a large number will be “primary care naive” and similarly present a one-time only opportunity for significant returns to initial preventative measures. But a disproportionately high number of children in the Nextera group means a diminished number of children in the remainder — and two groups that could not be presumed to respond identically to Nextera’s particular brand of medicine.

A similar factor might have arisen from the unusual way in which Nextera recruited its enrollees. A group of DigitalGlobe employees with a prior relationship with some Nextera physicians first brought Nextera to DigitalGlobe’s attention and then apparently became part of the enrollee recruiting team. Because of their personalized relationship with particular co-workers and their families, the co-employee recruiters would have been able to identify good matches between the needs of specific potential enrollees and the capabilities of specific Nextera physicians. But this patient panel engineering would result in a population of non-Nextera enrollees that was inherently less amenable to “Nexterity”. Again, it simply cannot be assumed that the improvement seen with one group would carry over to any other.

Perhaps most importantly, let us revisit the Nextera report’s own suggestion that the difference in populations may have reflected “hesitancy to move away from an existing physician they were actively engaged with”. High claims seem somewhat likely to match active engagement rooted in friendship resulting from frequent proximity. But consider, then, that the frequent proximity itself is likely to be the result of “sticky” chronic diseases that have bound doctor and patient through years of careful management. It seems likely that the people who stick with their doctors have a significantly different and less tractable set of medical conditions than those who have jumped to DPC.

Absent probing data on whether types of different health conditions prevail in the Nextera and non-Nextera populations, it is difficult to draw any firm conclusion about what Nextera might have been able to accomplish with the non-Nextera population.

These kinds of possibilities should be accounted for in any attempt to use the Nextera results to predict downstream cost reduction outcomes for a general population.

Perhaps the low pre-Nextera claims costs of the group that later elected Nextera reflect nothing more than the Nextera group having a high proportion of price-savvy HDHP/HSA members. If that is the case, Nextera can fairly take credit for making the savvy even savvier. But it cannot be presumed that Nextera could do as well working with a less savvy group or with those who do not have HDHPs.

Whether or not Nextera inadvertently recruited a study population that made Nextera look good, that study population was tiny.

Another basis for caution before taking Nextera’s 20% claim into any broader context is the limited amount of total experience reflected in the Nextera data: seven months of experience for 205 Nextera patients. In fact, Nextera’s own report explains that before turning to Nextera, DigitalGlobe approached several larger direct primary care companies (almost certainly including Qliance and Paladina Health); these larger companies declined to participate in the proposed study, perhaps because it was too short and too small. The recent Milliman report was based on tenfold greater claims experience, and even then it had too few hospitalizations for statistical significance.

Total claims for the short period of the Nextera experiment were barely over $300,000, so the 20% difference in differences claimed as savings comes to about $60,000. That’s a pittance.
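To put that figure in per-member terms, here is a rough back-of-the-envelope sketch in Python. It assumes a round $300,000 in total claims (the text says only “barely over”), the rounded 20% difference in differences, and 205 members enrolled for all seven months; the variable names are illustrative.

```python
total_claims = 300_000   # assumption: rounded from "barely over $300,000"
did_share = 0.20         # rounded difference in differences
members = 205
months = 7

claimed_savings = total_claims * did_share
member_months = members * months  # assumes full enrollment for all 7 months

print(f"Claimed savings: ${claimed_savings:,.0f}")
print(f"Per member over the study: ${claimed_savings / members:,.2f}")
print(f"Per member per month: ${claimed_savings / member_months:,.2f}")
```

Under these assumptions the claimed savings amount to a few hundred dollars per member over the whole study period, a sum small enough that a single large claim could swamp it.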

Consider that two or three members may have elected to eschew Nextera in May 2015 because, no matter how many primary care visits they might have been anticipating in the coming months, they knew they would hit their yearly out-of-pocket maximum and, therefore, not be any further out of pocket. Maybe one was planning a June maternity stay; another, a June scheduled knee replacement. A third, perhaps, was in hospital because of an automobile accident at the time of election. Did Nextera-abstention of these kinds of cases contribute importantly to pre-Nextera claims cost differentials?

The matter is raised here primarily to suggest the fragility of a purported post-Nextera savings of a mere $60,000 over seven months. An eighth month auto accident, hip replacement, or Cesarean birth could evaporate a huge share of such savings in a single day. The Nextera experience is too small to be reliable.

Nextera has yet to augment the study numbers or duration.

Nextera has not chosen to publish any comparably detailed study of downstream claims reduction experience more recent than 2015 data, whether for DigitalGlobe or any other group of Nextera patients. That’s a long time.

Nextera now has over one-hundred doctors, a presence in eight different states, and patient numbers in the tens of thousands. Shouldn’t there be newer, more complete, and more revealing data? (Note added in 2021 – In October 2020, Nextera provided new admittedly incomplete data, involving many more members and of longer duration. It was very, very revealing. See this analysis.)

Summation

Because of its short duration and limited number of participants, because it has not been carried forward in time, because of the sharp and unexplained pre-Nextera claims rate differences between the Nextera group and the non-Nextera group, and because its reported cost reductions do not distinguish between subscription members and per-visit members, the Nextera study cannot be relied on as giving a reasonable account of the overall effectiveness of subscription direct primary care in reducing overall care costs.

January 2021 Addendum: An additional study design defect skews results in Nextera’s favor.

June 1 is an odd time to start a health expenditure study, coming as it does near the mid-point of an annual deductible cycle. In the five months prior to the opportunity to enroll in Nextera, those who declined Nextera had combined claims that averaged $2041, while those who opted for Nextera had combined claims of only $1420. The average employer plan in the US in 2015 had a deductible of $1318 for a single employee. Whatever the level at DigitalGlobe, it is quite certain that the group that eschewed Nextera had significantly more members who had already met their 2015 deductible than those in the Nextera group, and more who were near to doing so.
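The size of that head start can be sketched with the figures just quoted. This is only an illustration: DigitalGlobe’s actual deductible is not given, so the 2015 national average is used as a stand-in benchmark, and group averages say nothing about how claims were distributed among individual members.

```python
# Average combined claims in the five months before Nextera enrollment opened.
avg_prior_claims = {"declined_nextera": 2041, "chose_nextera": 1420}

# Assumption: national average single-coverage deductible as a benchmark;
# DigitalGlobe's own deductible level is not stated.
benchmark_deductible = 1318

gap = avg_prior_claims["declined_nextera"] - avg_prior_claims["chose_nextera"]
print(f"Gap in average year-to-date claims: ${gap}")

for group, claims in avg_prior_claims.items():
    margin = claims - benchmark_deductible
    print(f"{group}: average claims exceed the benchmark by ${margin}")
```

The gap of roughly $600 in claims already incurred is what leaves the average Nextera enrollee, in effect, facing that much more deductible for the rest of the plan year.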

Note that an employee who had already met her annual deductible at the time Nextera became available would have had deductible-free primary care for the rest of the year, whether she joined Nextera or not. She would have gained nothing by choosing Nextera, but may well have had to change her PCP to one of the few on Nextera’s ultra-narrow panel.

More importantly, however, it is well known that, in the aggregate, once patients have cleared their deductible for an annual insurance cycle, they increase their utilization for the rest of the cycle. On the other hand, patients who do not envision meeting their deductible tend to defer utilization. Ask any experienced claims manager what happens in November and December.

Higher relative claims going forward for the non-Nextera group would be entirely predictable even if the Nextera and non-Nextera populations had had precisely equal risk profiles and had received, in the seven-month study period, precisely the same package of primary care services.

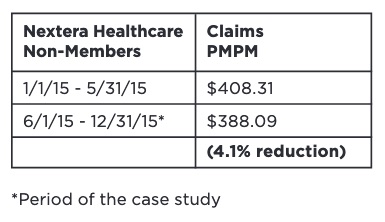

Nextera’s case study also had errors of arithmetic, like this one:

The reduction rounds off to 5.0%, the number I used in the larger table above.