While the DPC Coalition features an Iora clinic in Las Vegas as a data model of the joys of direct primary care, that clinic is simply not representative of a general population. It focused on a very high-need population, every member chronically ill. We are looking at people with $11,000 claim levels at 2014 prices; they are “superutilizers” in what is called a “hotspotters” program.

A close look at the very Iora data that the DPC Coalition presented to the United States Senate makes clear how inappropriate it is to use outlier populations.

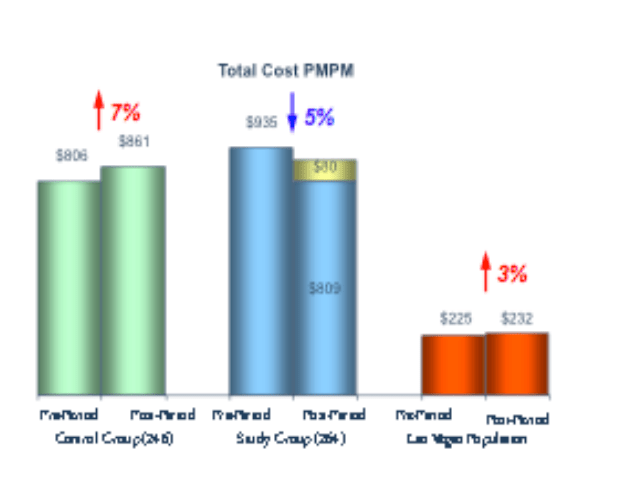

The green bars at left were drawn from what Iora and the DPC Coalition regard as “well-matched controls with equivalently sick populations”. Like the blue bars, which represent the Iora study group, the green bars are clearly far higher than the red bars that represent the general local population (Las Vegas). Since the blue bars are also a bit higher than the green bars, however, the question arises: in what sense were these controls “well-matched controls with equivalently sick populations”? To be meaningful, I suggest, these controls would have had to be matched by some assessment of chronic conditions and risk scores.

But why, if the groups were closely matched, is the pre-treatment bar at $935 for the study group but only $806 for the control group? If the controls were indeed well matched, then there must have been some sort of selection effect. I suggest that this was the result of patients being recruited into the Iora program when they presented with a high level of acute exacerbations of their underlying chronic conditions, with the “control group” fashioned retroactively based on those with the same chronic conditions – sans the level of exacerbations that had triggered recruiting.

A treatment year passed. A mix of medical inflation and noise brings the costs for the control group up to $861. But the total costs of the study group at the end of the treatment year, including the fees paid to Iora (shown in gold), are now $889 and still remain higher than those of the “well matched controls”.

And why have the total costs of the treatment group dropped by 5%? Because they’ve gotten “better” — regressing to the mean in their need for services. Indeed, if the controls had been well matched by risk scores at the start of the year, this is entirely predictable.
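For readers who want to see how selection alone can produce a drop like this, here is a minimal simulation sketch. Everything in it is hypothetical: the population size, the cost levels, the noise, and the recruiting threshold are arbitrary numbers chosen for illustration, not Iora data. It assumes each patient has a stable underlying cost level plus independent year-to-year noise, and that patients are recruited only if their first-year cost is high.

```python
import random
from statistics import mean


def simulate_selection(n=20000, seed=0, threshold=1000.0):
    """Toy model of regression to the mean (all numbers are made up).

    Each synthetic patient has a stable underlying cost level. Observed
    cost in each year is that level plus independent random noise.
    Nobody in this model receives any treatment.
    """
    rng = random.Random(seed)
    underlying = [rng.gauss(800, 100) for _ in range(n)]
    year1 = [u + rng.gauss(0, 150) for u in underlying]
    year2 = [u + rng.gauss(0, 150) for u in underlying]

    # "Recruit" only patients whose year-1 cost was high, mimicking
    # enrollment triggered by an acute flare-up.
    recruited = [i for i in range(n) if year1[i] > threshold]

    mean_y1 = mean(year1[i] for i in recruited)
    mean_y2 = mean(year2[i] for i in recruited)
    return len(recruited), mean_y1, mean_y2


count, before, after = simulate_selection()
print(f"recruited patients: {count}")
print(f"mean cost in selection year: {before:.0f}")
print(f"mean cost in following year: {after:.0f}")
```

In this toy model the recruited group's average cost falls in the second year even though no one was treated, because part of what got them recruited was a bad year rather than a permanently higher level of need.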

Is this wild speculation on my part? Hardly. An NEJM research piece noted regression to the mean of matched controls when a study of one of the pioneering superutilizer programs (Camden) showed no difference in hospitalization rates during the original study period between plan members and non-members. Sure, there might be some other explanation for the exact levels and changes seen in the bar graphs.

But what remains is this: During the treatment period, the total costs of the Iora study group, including the fees paid to Iora, were 3% more than the total costs of what Iora claimed were well matched controls.
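The percentages quoted here follow directly from the four dollar figures read off the bar chart. As a small sketch of that arithmetic (the variable and function names are mine, introduced only for illustration):

```python
def pct_diff(value, base):
    """Percentage difference of value relative to base."""
    return (value - base) / base * 100


# Dollar figures as read from the chart discussed above.
study_before = 935    # Iora study group, pre-treatment
control_before = 806  # control group, pre-treatment
study_after = 889     # Iora study group, treatment year, including Iora fees
control_after = 861   # control group, treatment year

# Starting gap between the supposedly matched groups (about 16%).
print(f"baseline gap: {pct_diff(study_before, control_before):.1f}%")

# Change in the study group over the treatment year (about -4.9%).
print(f"study group change: {pct_diff(study_after, study_before):.1f}%")

# Change in the control group over the same year (about +6.8%).
print(f"control group change: {pct_diff(control_after, control_before):.1f}%")

# Remaining gap in the treatment year (about 3.3%).
print(f"treatment-year gap: {pct_diff(study_after, control_after):.1f}%")
```

The study group starts roughly 16% above its controls and still ends about 3% above them once Iora's fees are counted.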

If “well matched controls” means anything sensible, the Iora study does not demonstrate a net cost reduction.

Back in the 90s, I did some federal legislative advocacy on health policy. I once asked Representative Sander Levin (D-Michigan) to present an amendment that would swing about $15 billion in the direction of low-income citizens.

“I’d love to do that, Mr. Ratner. But you’ll have to show me a $15 billion offset. If you can find it, get it to me this week.”

I actually found the money. The CBO had missed a $15 billion item in scoring the bill. You can bet I busted my ass (and confirmed my understanding with my betters like Stan Dorn) to make sure that I was not casually handing a load of careless bullshit to a federal legislator.

I guess times have changed.